Pattern Matching at Scale with BigQuery's Generative AI - Part 1

The core challenge: From statistical correlation to semantic understanding

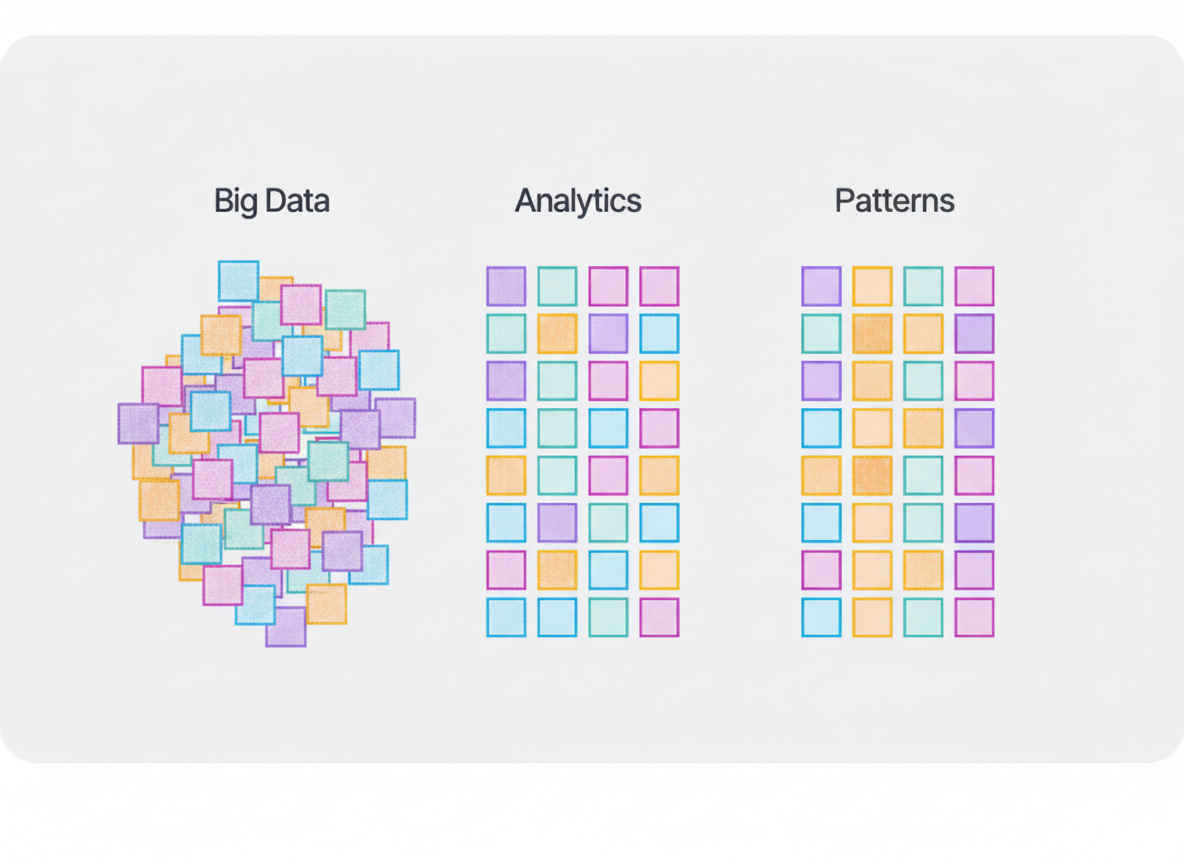

Traditional data analytics excels at finding statistical correlations, calculating averages, detecting outliers, running regressions. But businesses increasingly need something different: the ability to recognize complex patterns in context, understand narrative relationships, and generate hypotheses from incomplete information. This is pattern matching, and it requires semantic understanding rather than just mathematical computation.

Until recently, this type of analysis required either manual review by domain experts or complex custom ML pipelines maintained by specialized teams. Google Cloud BigQuery's integration of generative AI capabilities directly into its SQL environment changes this equation. Functions like ML.GENERATE_TEXT, ML.GENERATE_EMBEDDING, and VECTOR_SEARCH now enable pattern matching at scale through standard SQL queries.

What pattern matching with GenAI actually means

Pattern matching through GenAI differs fundamentally from traditional analytics in three ways:

Contextual Recognition: GenAI understands relationships between concepts, not just numerical correlations. It can recognize that "baseball-sized hail near a shopping center" represents a different risk profile than "baseball-sized hail in rural farmland", something that requires semantic understanding, not statistics.

Narrative Synthesis: GenAI can combine disparate data points into coherent assessments. Instead of generating separate statistics for wind speed, hail size, and population density, it can synthesize these into a unified risk narrative that captures their interactions.

Hypothesis Generation: Rather than only answering predefined questions, GenAI can suggest what questions to ask. It identifies patterns that might warrant investigation, generating hypotheses rather than just calculating probabilities.

The BigQuery advantage: SQL-Native AI Processing

Google Cloud BigQuery's implementation makes these capabilities accessible through standard SQL. This matters for three practical reasons:

Infrastructure Simplicity: No separate ML platforms, no data movement, no complex orchestration. The same BigQuery instance that stores data also processes it through AI.

Skill Accessibility: Data analysts can implement sophisticated pattern matching without learning new programming languages or ML frameworks. A 2021 Gartner survey found IT executives see talent shortage as the most significant adoption barrier to 64% of emerging technologies, though by 2022, 72% of executives reported they have or can source the AI talent they need. BigQuery's SQL approach helps address remaining skill gaps.

Scale Economics: BigQuery uses on-demand pricing of data processed, making large-scale pattern matching economically viable. Processing millions of records for pattern discovery costs the same whether using traditional SQL or AI functions.

Demonstrating the Approach: NOAA weather data as test case

To demonstrate these capabilities, we've built a pilot system that processes 75 years of NOAA severe weather data for insurance risk assessment. The database currently contains data from January 1950 to April 2025, as entered by NOAA's National Weather Service (NWS). This dataset provides an ideal test case because it combines:

Structured numerical data (wind speeds, damage estimates, geographic coordinates)

Unstructured text (event descriptions, observer comments, preliminary reports)

Real business value (insurance companies need better catastrophe risk assessment)

Verifiable outcomes (historical weather events have known insurance impacts)

The pilot, called CLARIS (County-Level Augmented Risk Intelligence System), shows how BigQuery's GenAI functions can transform raw weather observations into actionable risk intelligence. McKinsey estimates that AI technologies could add up to $1.1 trillion in annual value for the global insurance industry, the approach demonstrated here contributes to capturing that value.

What this article demonstrates

Through the CLARIS implementation, we'll show:

How to prepare data for GenAI pattern matching, creating narrative structures that large language models can effectively process

How to implement pattern matching pipelines in BigQuery, using ML.GENERATE_TEXT for narrative generation, AI.GENERATE_TABLE for structured hypothesis generation, ML.GENERATE_EMBEDDING for semantic fingerprinting, and VECTOR_SEARCH for pattern discovery.

How pattern matching complements traditional analytics, augmenting rather than replacing statistical methods

How to maintain rigor and traceability, ensuring AI-generated insights remain auditable and explainable

Each technical chapter includes actual SQL queries that readers can adapt for their own use cases. The goal isn't just to showcase what's possible, but to provide a practical template for implementing pattern matching in any domain where understanding context and relationships matters as much as calculating statistics.

Chapter 1.1: Historical risk master table building memory for pattern recognition

The Foundation: Why historical aggregation enables pattern matching

Pattern matching requires context, and context requires memory. When GenAI analyzes risk patterns, it needs more than isolated data points, it needs the full historical narrative of how risks have evolved over time. The Historical Risk Master table creates this structured memory by aggregating 75 years of NOAA storm events at county-month granularity, transforming millions of individual weather records into coherent risk profiles that GenAI can reason over.

This aggregation serves a dual purpose:

First, it creates statistical baselines that anchor GenAI's pattern recognition in empirical reality.

Second, it structures data in a way that mirrors how domain experts naturally think about risk, not as isolated events, but as evolving patterns with seasonal variations, geographic clustering, and temporal trends.

From raw events to risk narratives

The transformation from raw storm records to aggregated risk profiles is crucial for effective GenAI processing. Raw data contains millions of individual storm events, each a discrete observation with its own timestamp, location, and damage assessment. This granular detail, while valuable for statistical analysis, overwhelms GenAI's ability to identify meaningful patterns.

By aggregating at the county-month level, we create a narrative structure that GenAI can effectively process. Each row becomes a chapter in a county's risk story: "In July 2021, Dallas County experienced 15 severe weather events causing $2.3 million in property damage, with tornado activity reaching F3 intensity." This narrative format aligns with how large language models process information, as sequences of related concepts rather than isolated data points.

The aggregation also introduces temporal context through rolling averages and year-over-year comparisons. These metrics help GenAI distinguish between random fluctuations and genuine trend changes, critical for identifying emerging risk patterns that might signal climate change impacts or evolving vulnerability factors.

Enabling semantic understanding through structure

The table's design deliberately bridges structured and unstructured domains. Numerical aggregates (damage totals, event counts, magnitude averages) provide the quantitative foundation, while categorical fields (tornado scales observed, seasonal risk periods) add qualitative context that GenAI can interpret semantically.

The Storm Events Database contains records on various types of severe weather, as collected by NOAA's National Weather Service (NWS), providing ground truth that prevents GenAI hallucination.

Unlike synthetic training data, these records represent actual catastrophes that generated real insurance claims, giving GenAI patterns a solid empirical foundation.

The inclusion of human impact metrics (deaths, injuries) alongside financial damages enables GenAI to understand risk holistically. A county with high property damage but low human impact suggests different risk characteristics than one with similar financial losses but significant casualties, distinctions that pure statistical models might miss but that GenAI can incorporate into its pattern matching.

Scale and performance considerations

Processing 75 years of weather data, millions of individual storm events, demonstrates BigQuery's ability to handle pattern matching at scale. The aggregation query leverages BigQuery's columnar storage and distributed processing to transform vast historical records into manageable risk profiles without data movement or external processing.

This in-database transformation is essential for practical GenAI deployment. BigQuery's on-demand pricing model charges only for data scanned, making it economically feasible to process decades of historical data. The aggregated output, condensed to county-month summaries, becomes the efficient input for subsequent GenAI operations, reducing both processing costs and latency.

Business Value: from Data Points to Risk Intelligence

For insurance applications, this historical aggregation transforms scattered weather observations into actionable risk intelligence. Underwriters can see not just that a county experienced tornadoes, but how tornado patterns have evolved over decades, whether severity is increasing, and how current activity compares to historical baselines.

The rolling metrics and trend indicators prepare data for GenAI's pattern matching capabilities. When ML.GENERATE_TEXT processes these aggregated profiles in later steps, it can generate narratives like: "This county shows a 40% increase in severe weather frequency over the past decade, with particular acceleration in hail events during shoulder seasons, suggesting expanding risk beyond traditional peak months."

This structured historical foundation ensures that GenAI-generated insights remain grounded in empirical reality while enabling the semantic understanding that makes pattern matching valuable.

It's the critical first step in transforming BigQuery from a data warehouse into an intelligent pattern recognition system.

Query for Historical Risk Master Table Creation: https://github.com/ktiyab/CLARIS/blob/c82575f1d30a03ddb061f172430773fdd4dc8b3c/Historical_Risk_Master_Table_Creation.sql

Chapter 1.2: Event reports master table - capturing real-time signals for pattern detection

The nervous system: why Preliminary Reports enable Early Pattern Recognition

While historical data provides the memory for pattern matching, real-time signals act as the nervous system, detecting emerging threats before they become claims. The Event Reports Master table consolidates preliminary storm reports from field observers, creating a unified stream of early warning signals that GenAI can analyze for emerging patterns.

These preliminary reports arrive 30-60 days before insurance claims are filed, providing a critical window for proactive risk assessment. By standardizing reports from multiple sources, official weather stations, trained spotters, and social media observations, the table creates a comprehensive view of current atmospheric activity that traditional models often miss.

Standardizing chaos: from Heterogeneous Reports to Unified Signals

Raw preliminary reports arrive in various formats with different measurement units, severity scales, and quality levels. A tornado report uses the Enhanced Fujita scale (EF0-EF5), hail reports measure size in inches, and wind reports record speed in miles per hour. This heterogeneity prevents effective pattern matching across event types.

The standardization process transforms these diverse inputs into a common framework that GenAI can process holistically.

By mapping all severity measures to normalized patterns categories (MINOR, MODERATE, SEVERE), the system enables cross-type pattern recognition. GenAI can now identify that "severe hail in the morning followed by moderate tornado activity" represents a specific atmospheric pattern, regardless of the underlying measurement scales.

The inclusion of social media indicators adds a crucial dimension often absent from official reports. Comments containing "SOCIAL MEDIA" or "PHOTO" tags signal crowd-sourced intelligence that, while requiring validation, often provides the earliest detection of emerging threats. This multi-source approach mirrors how human analysts naturally gather intelligence, combining official data with field observations.

Quality scoring and temporal clustering

Not all reports carry equal weight. The table implements a quality scoring system that helps GenAI distinguish between verified measurements and unconfirmed observations. Reports from automated weather stations (ASOS) or with photographic evidence receive higher quality scores than unverified social media mentions.

Temporal clustering identifies whether reports are part of larger storm systems. Events occurring within six hours and 50 miles of each other likely represent the same atmospheric disturbance. This clustering helps GenAI recognize storm progression patterns, critical for predicting where risks might materialize next.

The "hours_since_report" metric enables GenAI to weight recent signals more heavily than older ones, crucial for real-time risk assessment. A cluster of severe reports from the past 12 hours suggests active threat conditions requiring immediate attention, while week-old reports provide context but not urgency.

Bridging Historical Context with Emerging Threats

The true value emerges when this real-time signal layer combines with historical baselines. GenAI can now detect anomalies by comparing current activity to historical patterns. A county experiencing tornado reports in typically quiet months, or hail sizes exceeding historical maximums, triggers pattern recognition that pure statistical models might miss.

This early detection capability transforms insurance operations. Instead of waiting for damage assessments, carriers can mobilize resources based on preliminary signals. GenAI might generate alerts like: "Unusual concentration of severe hail reports in counties with high commercial property exposure, pattern resembles the 2019 storm system that generated $500M in claims."

Performance and Scale Considerations

Processing thousands of real-time reports demonstrates BigQuery's ability to handle streaming pattern recognition. The consolidation of multiple report types into a single table eliminates the need for complex joins during GenAI processing, reducing both latency and computational costs.

BigQuery ML now supports text embeddings, allowing for advanced text analysis directly within SQL, making it possible to process the unstructured comments field alongside structured severity metrics.

This unified processing within BigQuery eliminates data movement between systems, crucial for maintaining real-time responsiveness.

Business Impact: From Reactive to Proactive Risk Management

For insurers, this real-time signal integration enables a fundamental shift from reactive claims processing to proactive risk management. Claims adjusters can be pre-positioned based on emerging patterns. Policyholders can receive targeted warnings. Reinsurance positions can be adjusted before losses materialize.

The combination of report standardization, quality scoring, and temporal clustering creates the structured foundation that enables GenAI to detect meaningful patterns in the noise of real-time data. When ML.GENERATE_TEXT processes these signals in subsequent steps, it can identify subtle patterns that human analysts might miss: "Social media chatter about 'green sky' conditions preceded severe hail by 2-3 hours in 73% of cases, potential early warning indicator."

Query for Event Reports Master Table Creation: https://github.com/ktiyab/CLARIS/blob/c82575f1d30a03ddb061f172430773fdd4dc8b3c/Event_Reports_Master_Table_Creation.sql

Chapter 1.3: enriched location master table - creating complete risk narratives for GenAI

The Synthesis: why Comprehensive profiles enable Intelligent Pattern Matching

Pattern matching requires context, and context requires completeness. The Enriched Location Master table represents the culmination of data preparation, synthesizing 75 years of historical patterns with real-time signals to create comprehensive risk narratives that GenAI can interpret holistically. Each county becomes a complete story rather than fragmented data points.

This synthesis is crucial because GenAI processes information contextually, similar to how an experienced underwriter evaluates risk. An underwriter doesn't just look at recent claims or historical averages in isolation, they consider the full picture: historical patterns, recent trends, current activity, and emerging signals. The enriched profiles provide GenAI with this same comprehensive view, enabling nuanced pattern recognition that statistical models alone cannot achieve.

Bridging Temporal Scales: from Decades to Days

The table's design deliberately spans multiple temporal scales, creating a narrative that flows from long-term patterns to immediate threats. The 75-year cumulative totals establish baseline risk characteristics, what's normal for each county over generational timescales. The 5-year recent trends identify whether risks are evolving, potentially signaling climate change impacts or infrastructure changes. The 30-day activity indicators capture current threat levels.

This temporal bridging enables GenAI to distinguish between different types of risk patterns. A county with high historical damage but minimal recent activity suggests past vulnerabilities that may have been mitigated. Conversely, a historically quiet county showing sudden activity spikes warrants immediate investigation. These nuanced distinctions require the full temporal context that the enriched profiles provide.

The volatility metrics add another dimension by measuring not just average risk but risk consistency. Two counties with identical average damages might have vastly different risk profiles, one with steady, predictable losses versus another with rare but catastrophic events. GenAI can incorporate this volatility into its pattern matching, recognizing that high volatility requires different risk management strategies than consistent, moderate losses.

Preliminary Classifications as Hypotheses, Not Conclusions

The risk classifications generated by this table are explicitly labeled as "PRELIMINARY" patterns for investigation, not definitive insurance ratings. This distinction is critical for responsible AI deployment. The classifications represent data-driven hypotheses that GenAI can use as starting points for deeper analysis, not final judgments.

These preliminary patterns serve as attention-focusing mechanisms for GenAI. When processing millions of data points, GenAI needs guidance on where to look first. Counties flagged as "EXTREME_RISK_ZONE" based on objective criteria (cumulative damage exceeding $500M with accelerating trends) warrant different analytical depth than those in "LOW_RISK_ZONE" categories.

The classification logic transparently combines multiple risk dimensions, historical damage, recent acceleration, current activity, and worst-case severity. This multi-factor approach prevents oversimplification while remaining interpretable. GenAI can understand why a county received a particular classification and adjust its analysis accordingly.

Enabling Semantic Risk Understanding

The enriched profiles transform numerical risk metrics into semantic concepts that GenAI can reason about. The "top_damage_event_types" array doesn't just list event types, it creates a risk signature unique to each county. GenAI can recognize that a county dominated by tornado damage requires different risk assessment than one primarily affected by hail, even if total damages are similar.

When two entities are semantically similar, their respective embeddings are located near each other in the embedding vector space, and these enriched profiles provide the rich feature set needed for meaningful semantic similarity. Counties with similar risk profiles, even if geographically distant, can be identified through pattern matching rather than manual categorization.

Scale and Performance Optimization

Processing 75 years of data for over 3,000 U.S. counties demonstrates BigQuery's ability to handle comprehensive risk profiling at scale. The aggregation strategy, pre-computing complex metrics rather than calculating them during GenAI processing, optimizes both cost and performance.

BigQuery's capacity-based pricing model allows predictable costs for query workloads, making it feasible to maintain continuously updated risk profiles. The enriched table structure eliminates complex joins during GenAI operations, crucial for maintaining low latency when generating real-time risk assessments.

Query for Enriched Location Master Table Creation: https://github.com/ktiyab/CLARIS/blob/c82575f1d30a03ddb061f172430773fdd4dc8b3c/Enriched_Location_Master_Table_Creation.sql

Part 1 Summary: The Foundation for Intelligent Pattern Matching

These three master tables: Historical Risk, Event Reports, and Enriched Location, create the structured foundation that enables GenAI to perform sophisticated pattern matching at scale. Together, they transform 75 years of scattered weather observations into coherent risk narratives that bridge past, present, and future.

The aggregation strategy serves a crucial purpose: converting millions of raw data points into thousands of enriched profiles that GenAI can process efficiently. This is data compression with narrative construction, each enriched location profile tells a complete risk story that GenAI can understand, compare, and reason about.

Coming next: Unleashing GenAI's Pattern Matching Power

With our data foundation established, Part 2 will demonstrates how BigQuery's GenAI capabilities transform these structured narratives into actionable intelligence.

The transition from structured data to GenAI processing represents the key mindset: we're not replacing traditional analytics but augmenting it with semantic understanding. The structured foundation ensures GenAI remains grounded in empirical reality, while the pattern matching capabilities surface insights that would be impossible to detect through conventional methods.